Why is it important to understand how second language acquisition works?

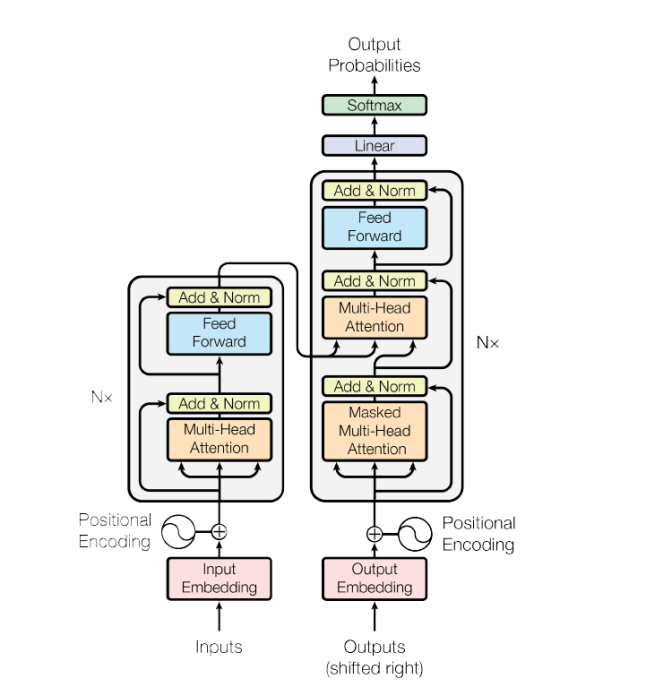

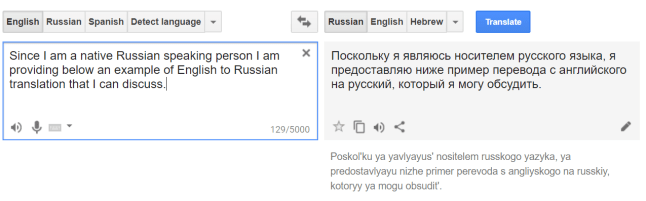

Nowadays we live in a world that is more interconnected than ever before. The Internet, including social media, has made communication nearly instant. This in turn has opened up opportunities to communicate with people who speak different languages. The slight complication is that in order to speak with someone who uses a different language than you do, you need to learn that language. This amounts to second language acquisition (SLA). Of course, technology has found a workaround for this problem in machine translation. Early machine translation systems were rule-based, and the results were not that good. Then came statistical methods, including phrase-based translation. Finally, in November 2016, Google launched end-to-end Neural Machine Translation based on artificial neural networks, an approach now known as Deep Learning. The results of this new approach were quite impressive in comparison with the struggling earlier approaches. If you haven't used this service yet, try it and see for yourself. Since I am a native Russian speaker, I am providing below an example of an English-to-Russian translation that I can discuss.

The link for this specific translation is here. I think any Russian speaker would agree with me that this machine translation is grammatically correct and sounds fine.

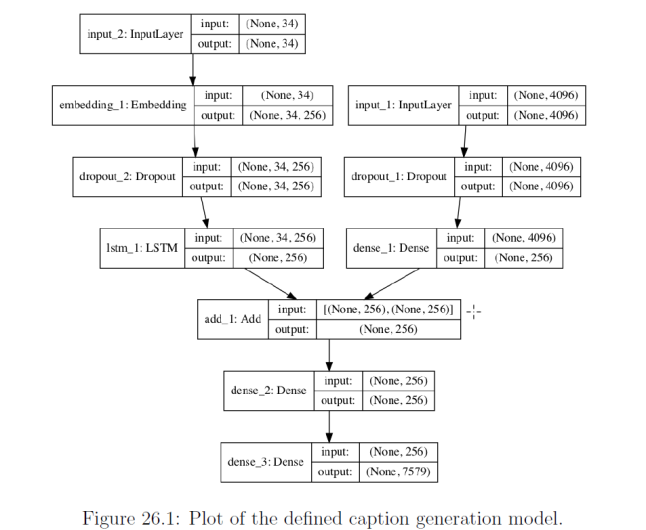

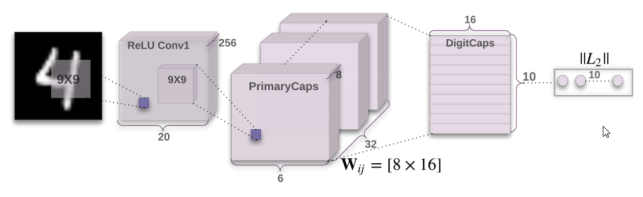

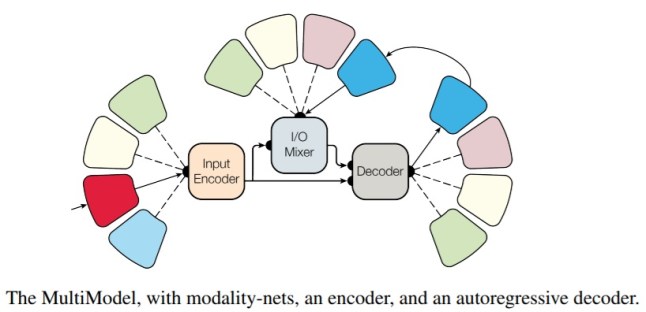

Now back to the subject of SLA. It seems to me that looking into how the Deep Learning techniques and models now used for Natural Language Processing (NLP) work may be very useful in tackling SLA. Examples include word embeddings, such as Tomas Mikolov's word2vec, and Long Short-Term Memory (LSTM) networks, which are a special case of Recurrent Neural Networks. These and other approaches employed in machine translation may shed light on some aspects of first and second language acquisition in humans. Even though the neural networks currently in use are largely based on an oversimplified and superficial model of a neuron, dating back to the Perceptron introduced in the late 1950s, the successes of such methods cannot be easily dismissed. Why is that?

The notion "probability of a sentence" is an entirely useless one, under any known interpretation of this term. (Chomsky, 1969)

In recent years, thanks to advances in Graphics Processing Unit (GPU) capabilities and the introduction of new architectures and methods in artificial neural networks, now known as Deep Learning, one of the long-standing challenges, namely machine translation, seems to be giving way. The quote above, which belongs to Noam Chomsky, the founder of Generative Grammar and Generative Linguistics, may finally be proclaimed wrong, and Generative Grammar theory may be seen as proven incorrect by the successes of machine translation based on Recurrent Neural Networks and statistical methods. Then, if Generative Grammar is not that useful for modeling first or second language acquisition in humans, what else is? In the following parts I'll provide my suggestions as to which approaches may be more efficient in modeling natural language. And, as is not hard to guess, Deep Learning may provide at least a partial answer.
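To make the notion of the "probability of a sentence" a bit more concrete, here is a minimal sketch of a bigram language model with add-one smoothing. It is not how neural machine translation works, and the toy corpus and the function names (`train_bigram_model`, `sentence_log_prob`) are my own illustrative assumptions. It only shows that even a very crude statistical model assigns a higher probability to a well-formed sentence than to the same words in scrambled order.

```python
import math
from collections import Counter


def train_bigram_model(sentences):
    """Count context words and word pairs in a list of sentences."""
    contexts = Counter()   # how often each word appears as the previous word
    bigrams = Counter()    # how often each (previous, current) pair appears
    vocab = set()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        vocab.update(tokens)
        contexts.update(tokens[:-1])
        bigrams.update(zip(tokens, tokens[1:]))
    return contexts, bigrams, vocab


def sentence_log_prob(sentence, contexts, bigrams, vocab):
    """Log-probability of a sentence under the add-one smoothed bigram model."""
    tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
    vocab_size = len(vocab)
    log_prob = 0.0
    for prev, cur in zip(tokens, tokens[1:]):
        # Add-one smoothing keeps unseen word pairs from getting zero probability.
        p = (bigrams[(prev, cur)] + 1) / (contexts[prev] + vocab_size)
        log_prob += math.log(p)
    return log_prob


# Toy corpus, invented purely for illustration.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "a cat chased the dog",
]
contexts, bigrams, vocab = train_bigram_model(corpus)

fluent = sentence_log_prob("the cat sat on the rug", contexts, bigrams, vocab)
scrambled = sentence_log_prob("rug the on sat cat the", contexts, bigrams, vocab)

print("fluent sentence log-probability:   ", fluent)
print("scrambled sentence log-probability:", scrambled)
```

Note that the fluent sentence never appears in the corpus as a whole, yet the model still prefers it to the scrambled version, because every pair of adjacent words in it has been seen before. Neural language models replace these counts with learned representations, but the quantity they estimate is the same.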

References

- Tomas Mikolov, PhD thesis, 'Statistical Language Models Based on Neural Networks'

- Peter Norvig, 'On Chomsky and the Two Cultures of Statistical Learning'